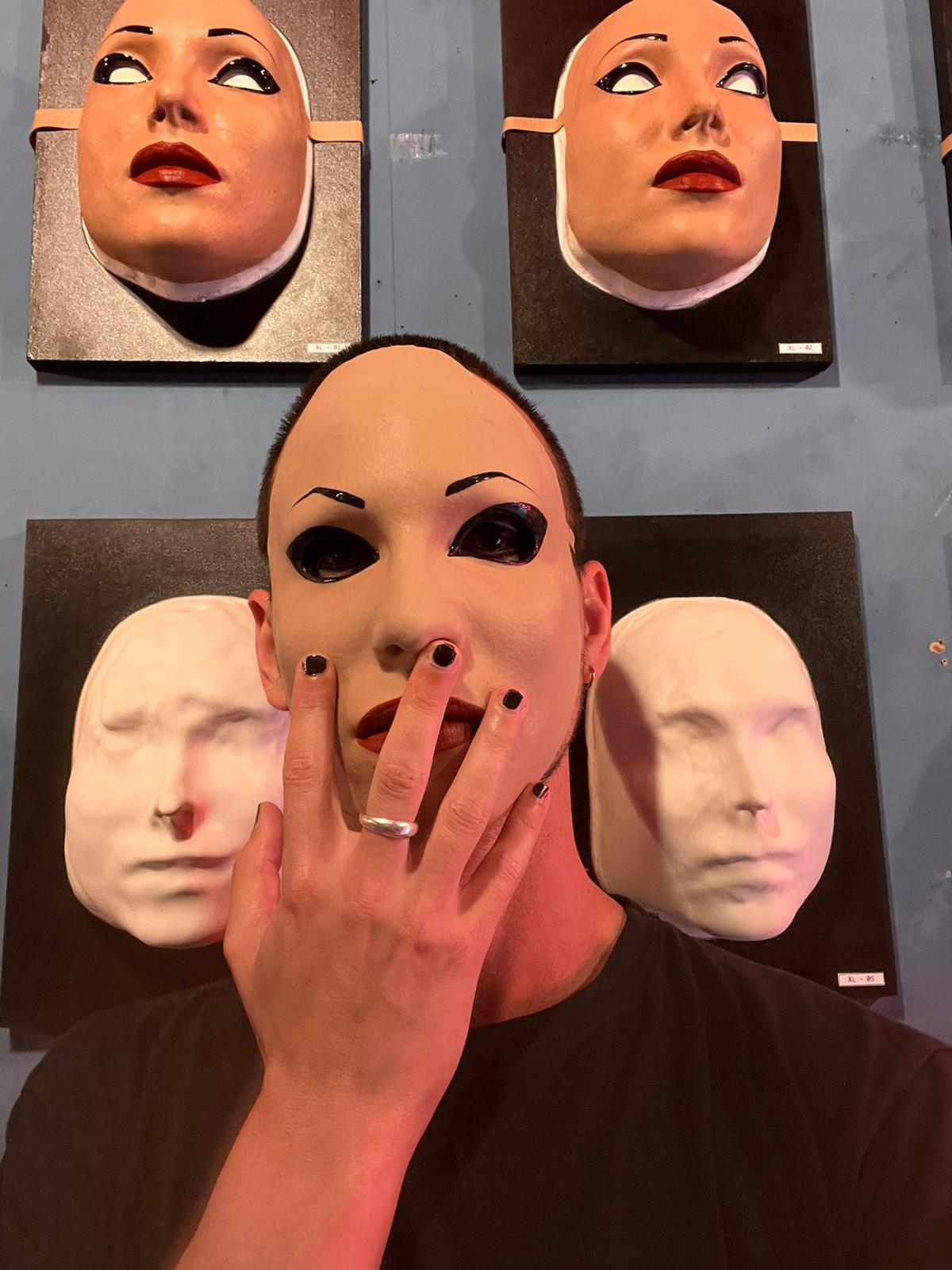

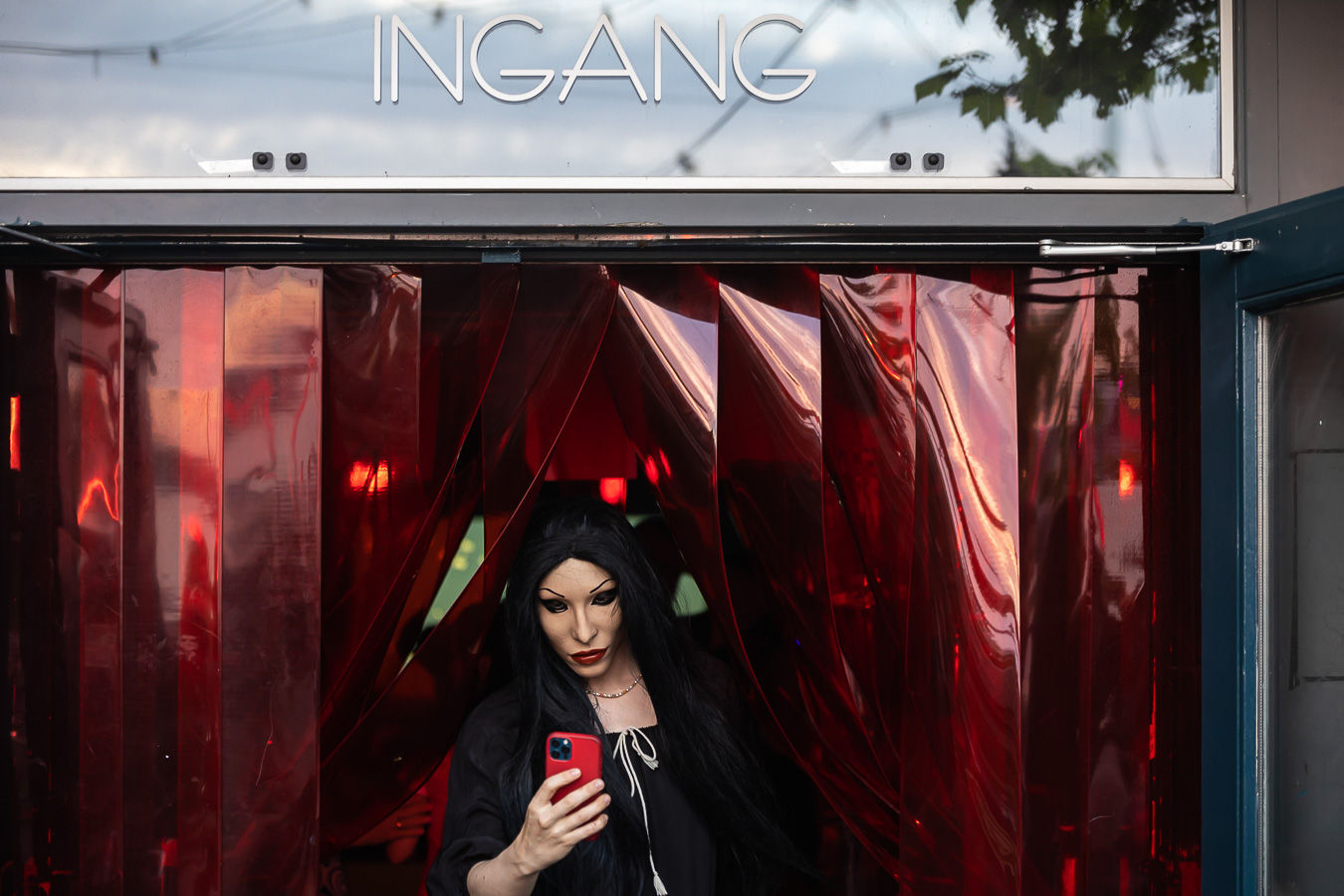

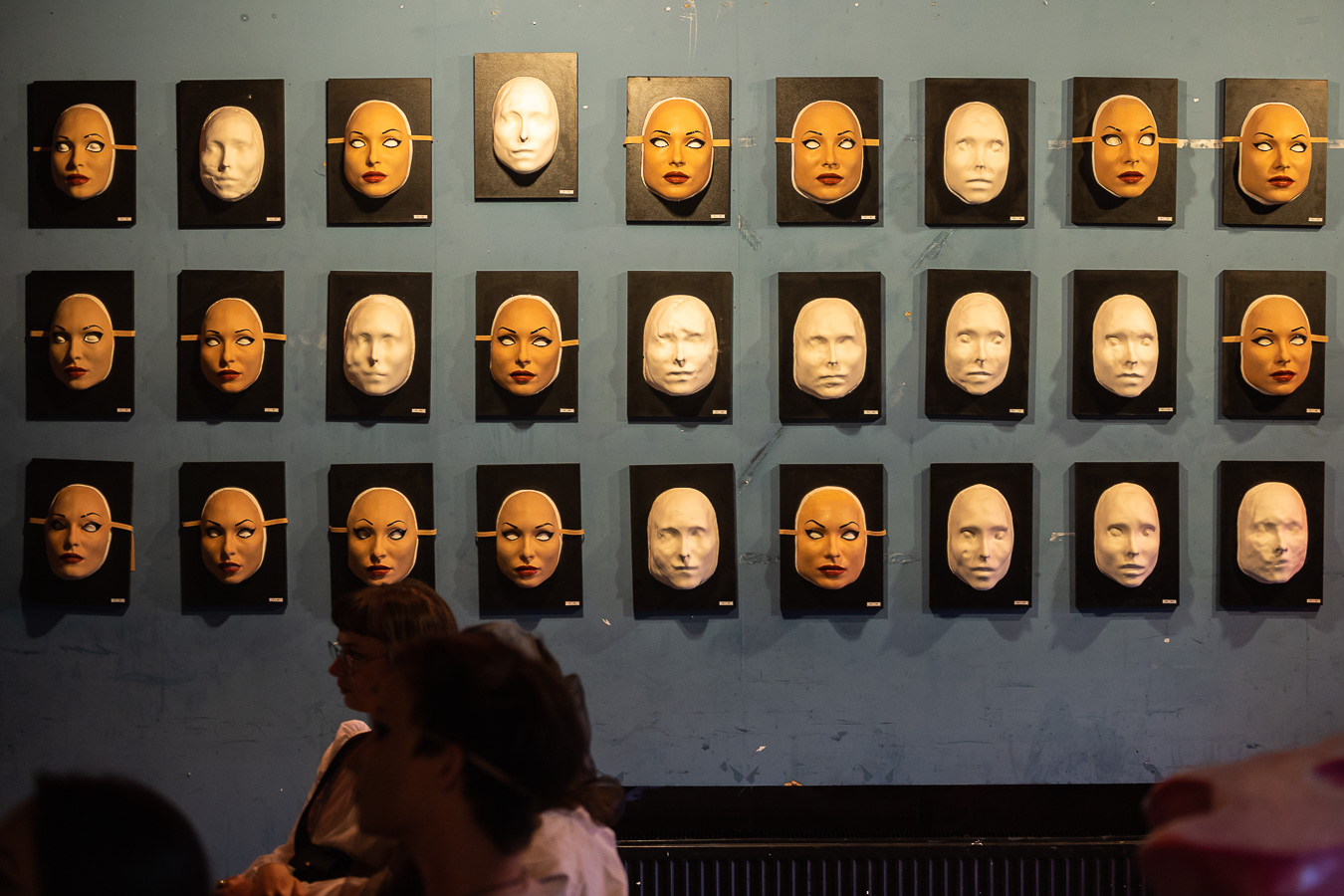

The MyFACE project kicked off in the summer of 2022 by collecting signatures for the Reclaim Your Face campaign, a European Citizens' Initiative to ban biometric mass surveillance practices, initiated by European Digital Rights (EDRi) in Brussels and by the privacy movement Bits of Freedom in the Netherlands. MyFACE walks the line between activism in public space and research into the relations between identity, AI and the weaponisation of biometric data. The first performance took place in June 2022 at SEXYLAND World and featured 31 masks.

The performance involved a group of performers who ventured into the city of Amsterdam, all wearing silicone masks. Some masks appeared identical to my face, while others included alterations and distortions. The performers were assigned a specific route through Amsterdam. Some travelled by public transport, while others walked or cycled, and some stopped intermittently at shops or cafés. Along their assigned route, the participants shot videos and pictures with their mobile phones and swiftly uploaded this content to their personal social media accounts. Fooled by the masks, social media algorithms identified me as an individual subject appearing, paradoxically, in multiple places at once, creating a glitch of fracture and multiplicity within the otherwise individualised subjective node interpellated as “Laura A Dima”.

Related shows:

- 05.05.2024: Knocked Out until 23.06.2024 - Wunderkamer
  - Group exhibition

- 09.02.2024: Graukunst: Fetish until 11.02.2024 - Grey Space in the Middle
  - Group show

- 15.01.2024: Big Brother Awards - De Brakke Grond
  - Group show / Awards event

- 29.09.2023: MURF/MURW 2023 until 01.10.2023 - Theater de Nieuwe Vorst
  - Group exhibition

- 05.05.2023: L’AGE D’OR until 07.05.2023 - The Crypt Gallery
  - Group exhibition

- 15.06.2022: myFACE - Sexyland World
  - Performance